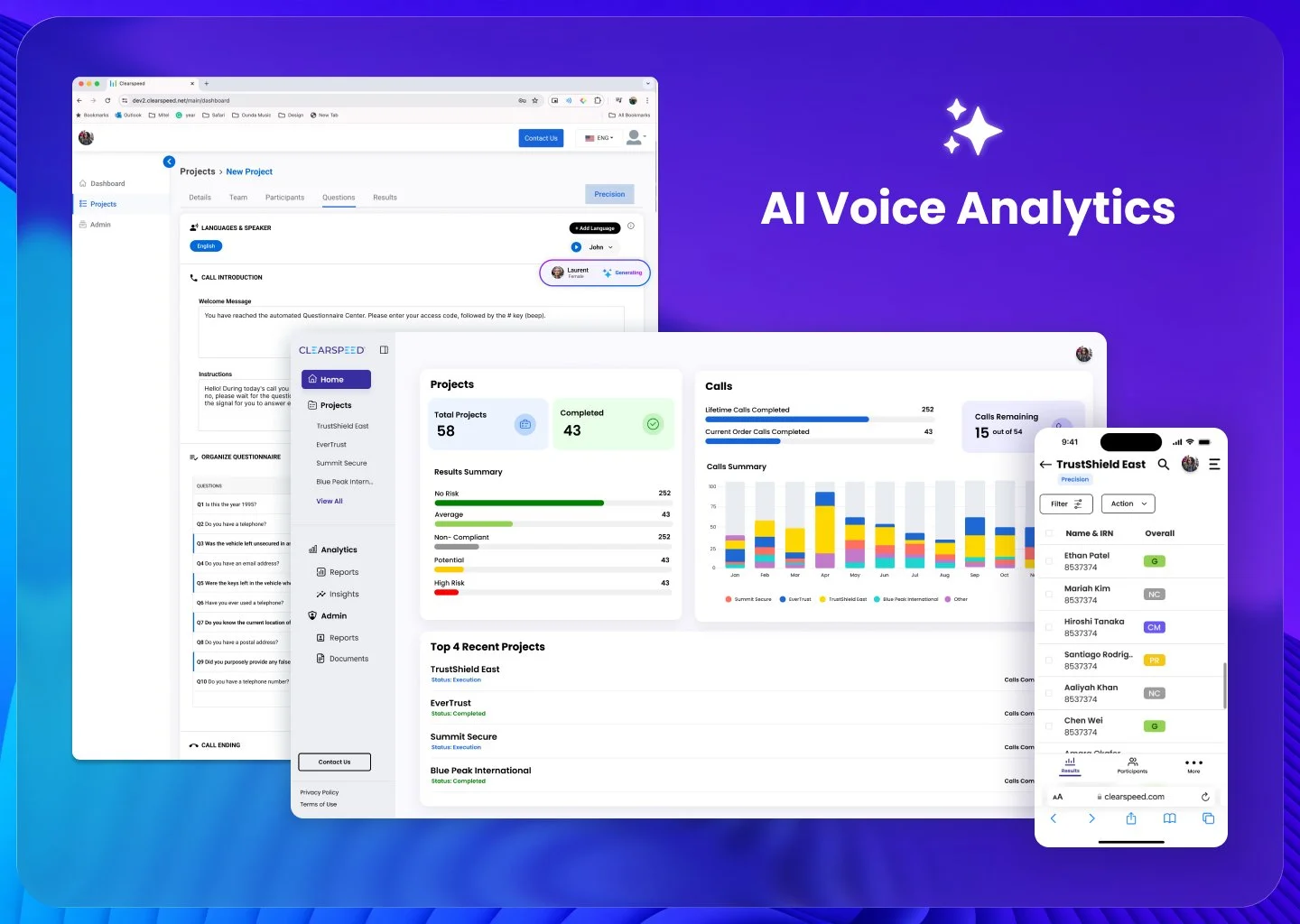

Transforming an AI Service Model into a Scalable Self-Service Enterprise Platform

ClearSpeed is an AI voice-analytics platform used by insurance and government organizations to assess fraud risk and prioritize claims.

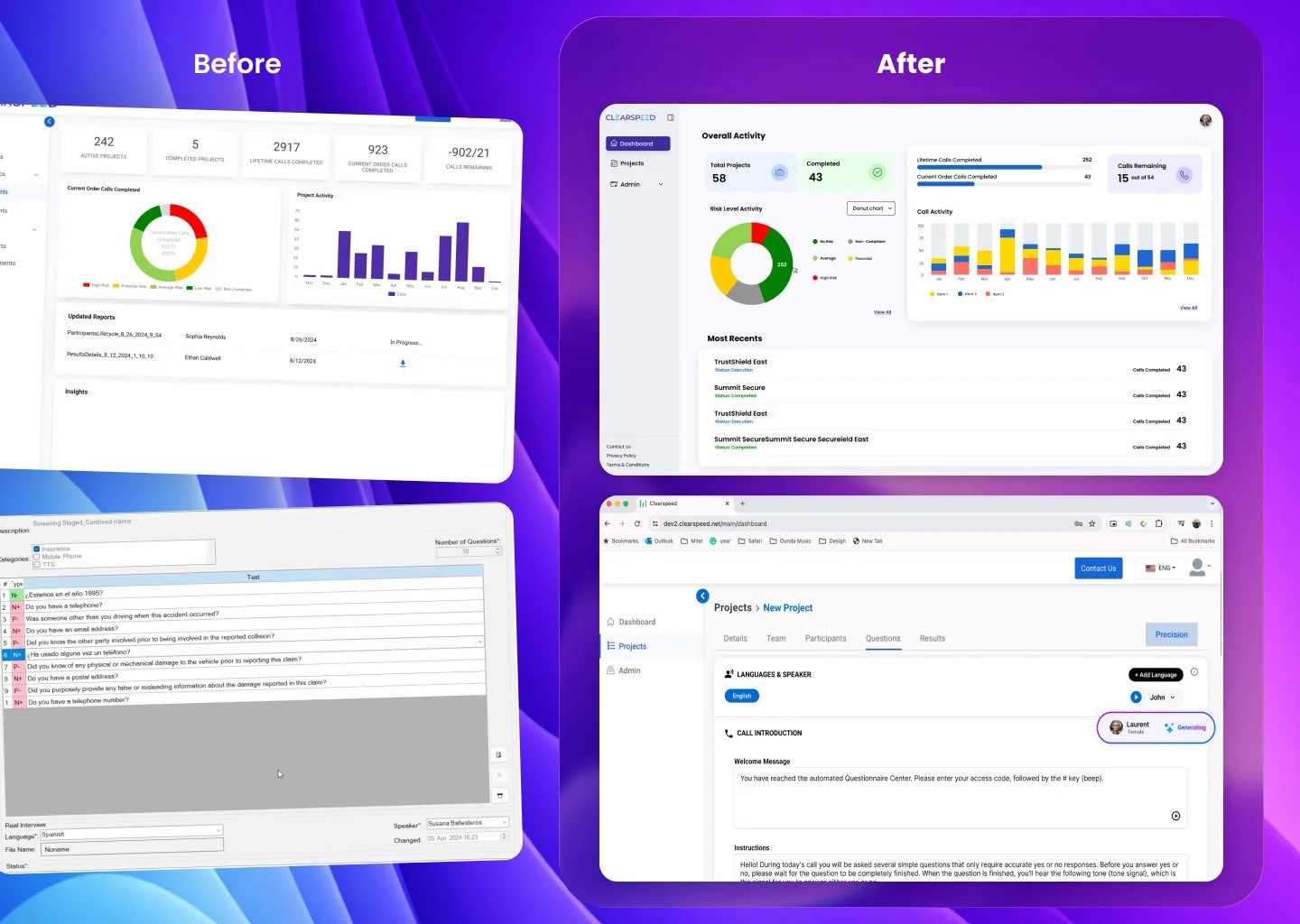

When I joined, the AI worked — but the product didn’t scale.

The platform depended on two internal operational teams to manually prepare, submit, interpret, and deliver AI analyses to clients.

As the sole product designer, I led the transformation from a human-dependent service workflow into a scalable self-service enterprise product.

Impact

Reduced operational dependency on internal teams

Shortened deployment and review cycles

Improved clarity of AI outputs

Increased client confidence and usability

Enabled scalable remote risk screening

My Role

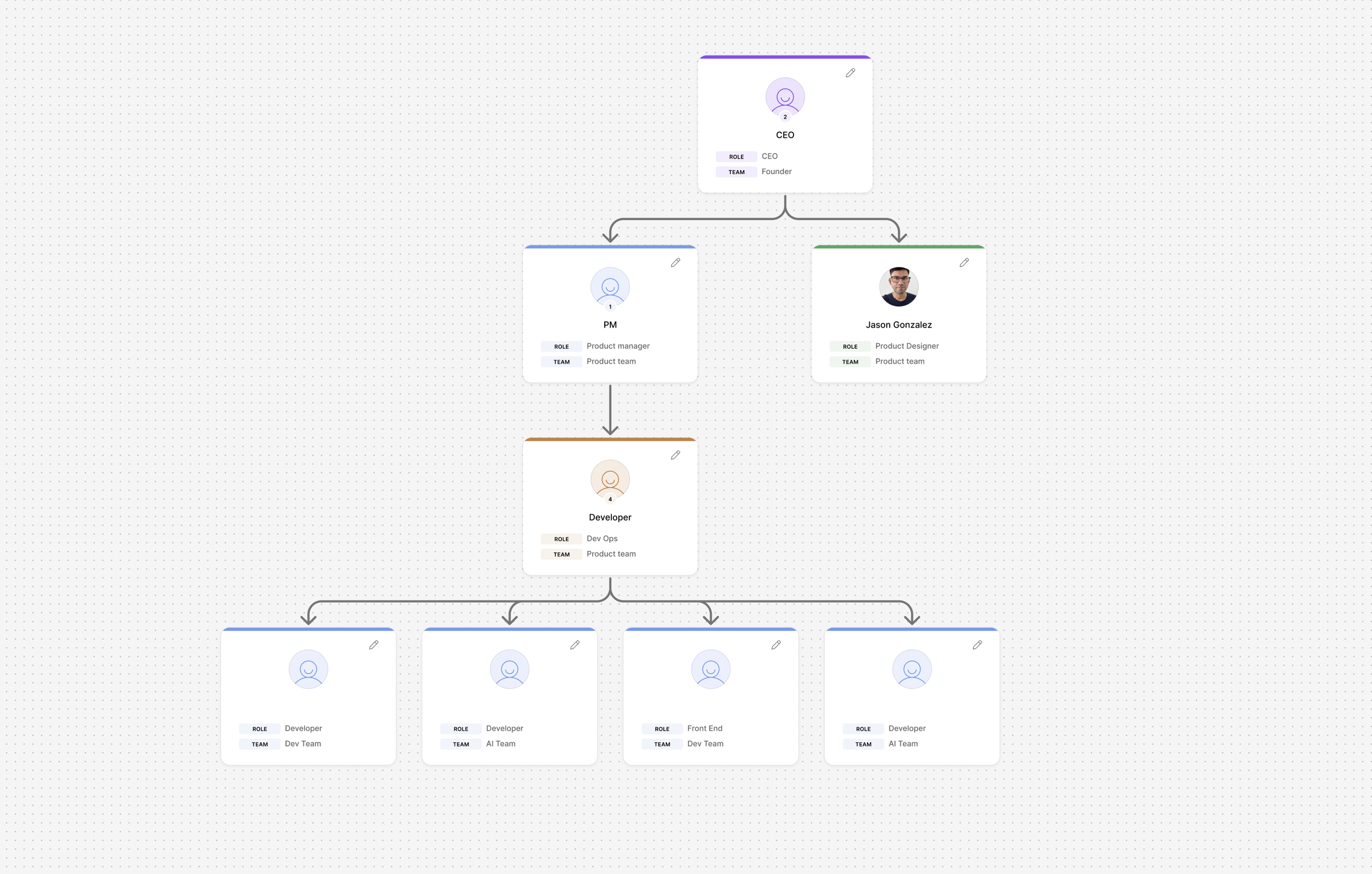

I was the sole product designer collaborating with five engineers, one project manager, and the company founder.

I owned the design process end-to-end:

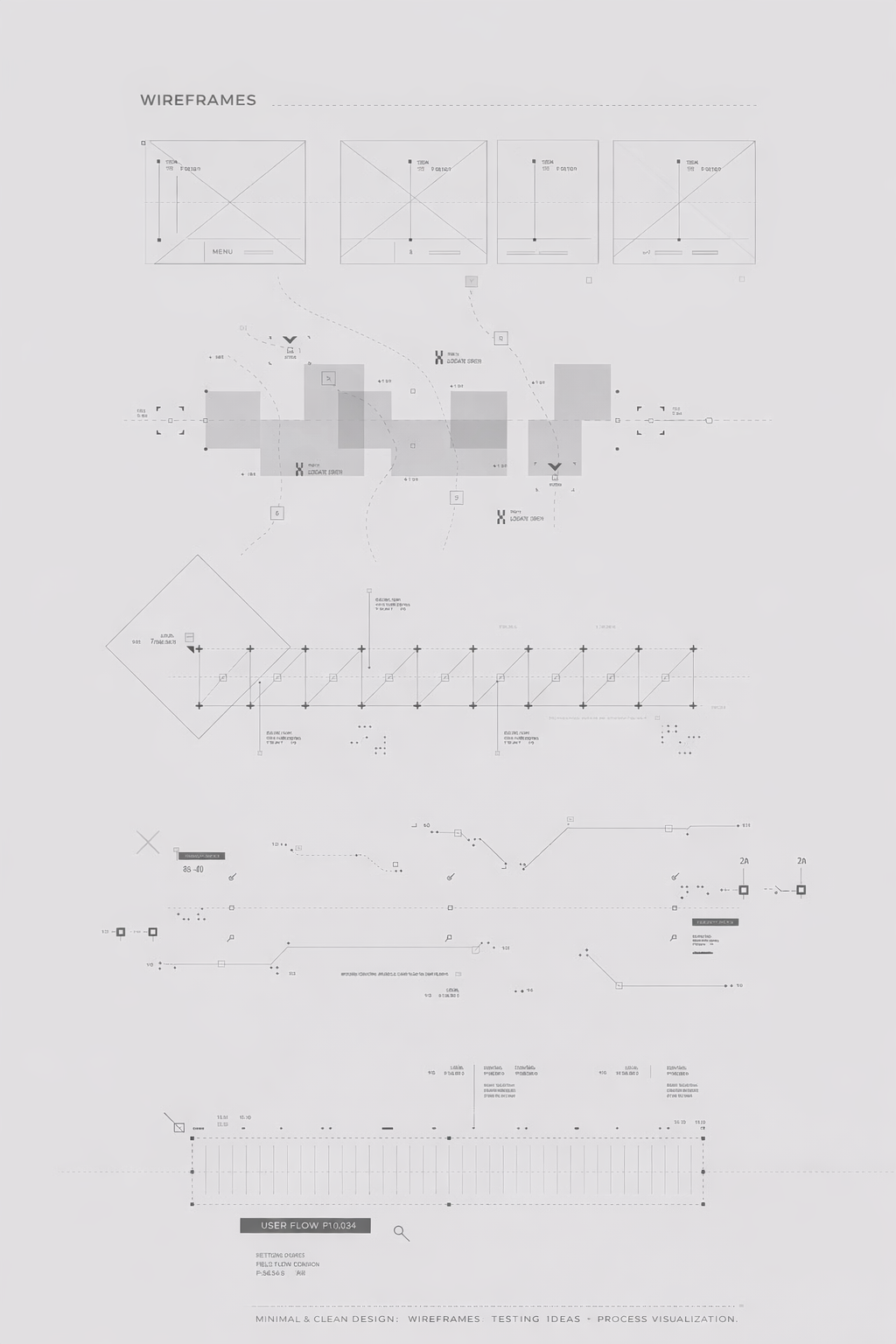

Research planning and execution

Stakeholder interviews (internal teams and clients)

Journey mapping and persona definition

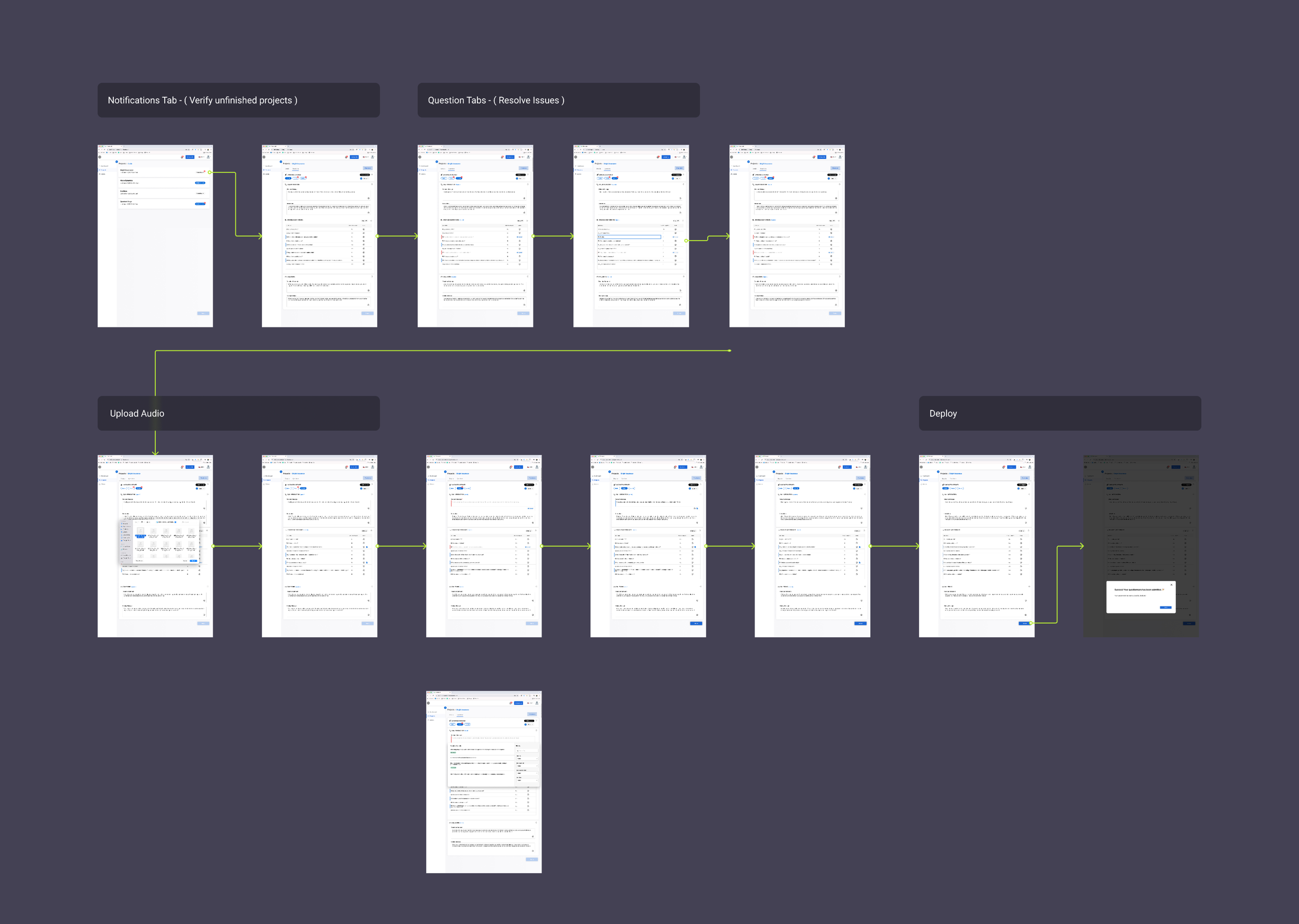

UX architecture and workflow redesign

Prototyping and usability testing

Final UI design

Engineering collaboration during implementation

Creation of the first scalable Figma component library

Responsive design (web-first, with 40% of usage on mobile)

This was not just interface design — it was product restructuring.

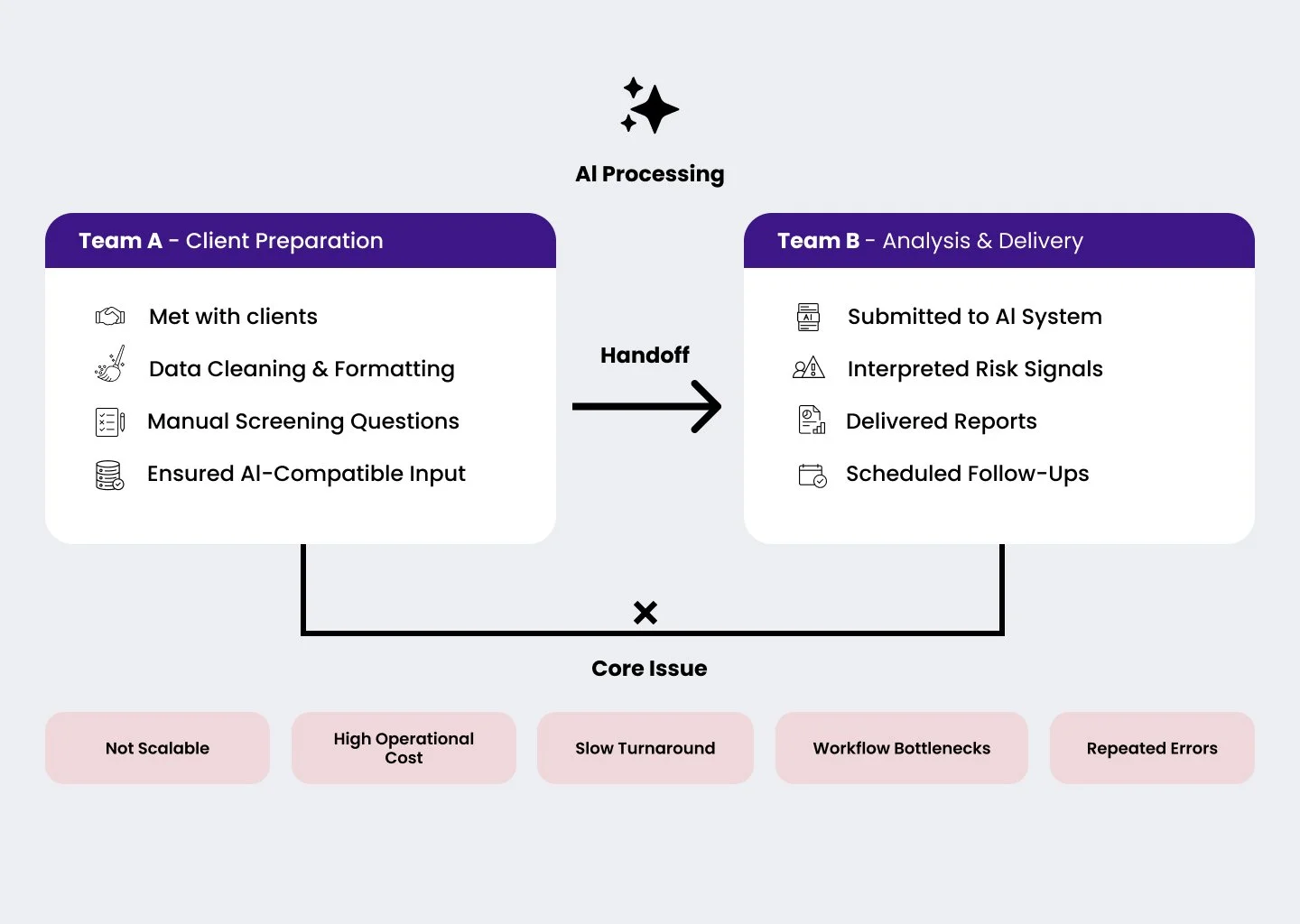

The Original Problem

ClearSpeed’s AI required two internal teams to operate, so it functioned more like a service layer than a product. In some cases, a single analysis required days of coordination.

Understanding the Real Friction

I started by mapping internal operations.

I interviewed both teams to analyze task breakdowns, time per phase, failure points, redo rates, cross-team dependencies, and regulatory constraints. This research revealed that end-to-end journeys often took days or even weeks to complete. Custom question creation required engineering intervention, which created further bottlenecks. Clients were largely unaware of this internal complexity, and most delays stemmed from structural workflow issues rather than from limitations of the AI itself.

Then I shifted focus to clients.

Through weekly research sessions with active clients, I gathered:

Pain points

Confusion areas

Frustrations

Desired level of autonomy

Deployment expectations

This reshaped the entire product strategy.

A Critical Trade-Off: Custom Questions vs Pre-Written Intelligence

One major bottleneck stood out.

Most screening questions were pre-written and optimized for AI accuracy.

But when clients requested custom questions:

Engineering needed to write them

Internal validation was required

Deployment could take days or weeks

This slowed everything down.

My Design Decision

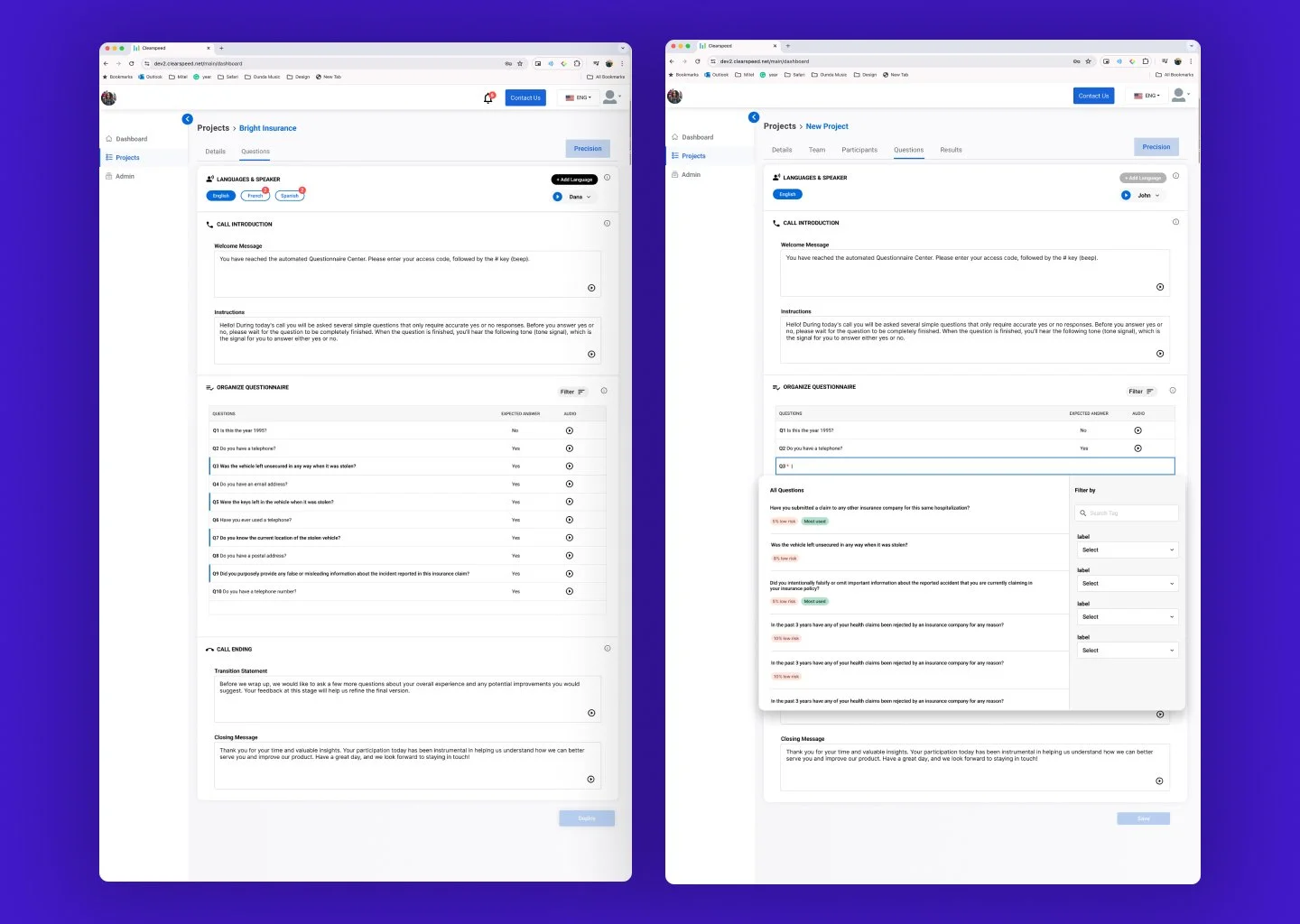

Instead of removing custom questions, I redesigned the system to guide users toward optimized pre-written questions while preserving flexibility.

I introduced:

A structured question library

A guided stepper that visually communicated deployment speed

Clear system messaging explaining review delays for custom entries

Leveraging the AI Audio System

To reinforce this behavior, I integrated our AI voice generation and audio library into the experience.

When users selected a pre-written question:

The system generated the voice output instantly

No review process was required

No waiting time was introduced

Users could immediately select their preferred AI voice

The final audio experience was delivered in real time

This created a strong sense of completion.

Users selected a question, generated the audio, and received the output instantly.

By contrast, when users entered a custom question, the system clearly communicated that:

Review was required

Processing would take longer

Deployment would occur later

This established a clear “do it now” versus “do it later” behavioral pattern.

Behavioral Outcome

Users could instantly understand the trade-off:

Pre-written → Immediate deployment + instant AI voice generation

Custom → Review required + delayed deployment

In usability testing, most users preferred the structured pre-written library once they experienced the speed, immediacy, and completion feedback loop.

The design preserved freedom while naturally guiding users toward the optimized path through clarity, speed, and psychological reward.

Measurable Improvements

After launch:

Reduced internal team dependency

Shortened deployment timelines

Increased user completion rates

Improved satisfaction during onboarding

Reduced repeated test submissions

The AI moved from being service-operated to product-driven.

Mobile Expansion

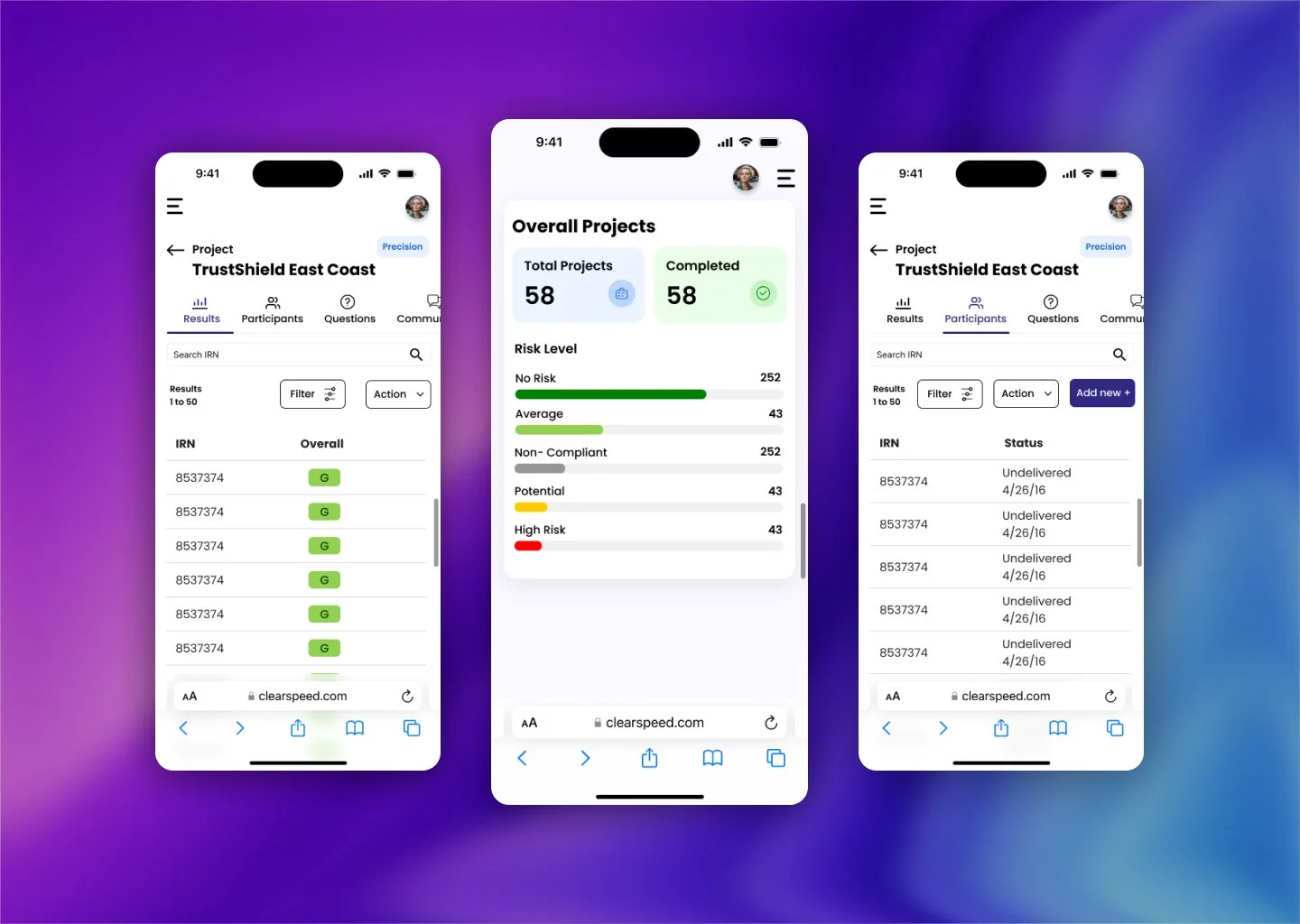

After stabilizing the web experience, I began adapting the workflows for responsive and mobile contexts.

Mobile required:

Tighter hierarchy

Reduced cognitive load

Simplified input flows

The mobile experience launched successfully, though my contract ended before I could guide its long-term evolution.

Reflection & Future Opportunities

If I were to evolve this product further, I would:

Simplify AI response interpretation even more

Introduce reusable deployment templates

Reduce call scheduling dependencies

Explore automation in validation layers

There is an opportunity to move from guided configuration to predictive configuration.